Apple's Siri, Google Assistant, Amazon Alexa, and Microsoft's Cortana...

https://goo.gl/wh6Aze

Methodology

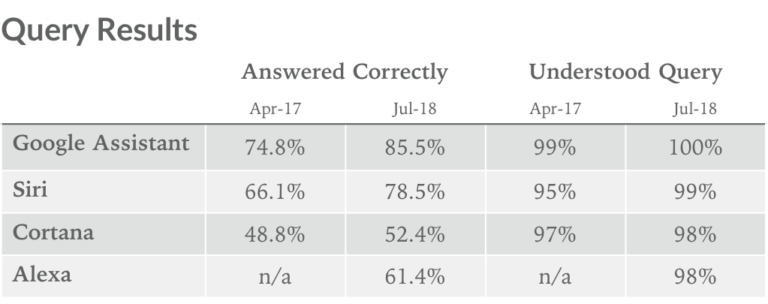

We asked each digital assistant the same 800 questions and graded each response on two metrics: (1) Did it understand what was being asked? (2) Did it deliver a correct response? The question set, designed to comprehensively test a digital assistant’s ability and utility, is broken into 5 categories:

Local – Where is the nearest coffee shop?

Commerce – Can you order me more paper towels?

Navigation – How do I get to uptown on the bus?

Information – Who do the Twins play tonight?

Command – Remind me to call Steve at 2pm today.
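The grading scheme described above can be sketched as a small tally. This is only an illustration, not the tooling used for the test: the `GradedQuestion` record, the `score_by_category` function, and the sample rows are hypothetical, and the sample values are placeholders rather than results from the study.

```python
from collections import defaultdict
from dataclasses import dataclass

# The five categories named in the methodology.
CATEGORIES = ("Local", "Commerce", "Navigation", "Information", "Command")


@dataclass
class GradedQuestion:
    """One graded response (hypothetical record layout)."""
    category: str
    understood: bool  # metric 1: did it understand what was being asked?
    correct: bool     # metric 2: did it deliver a correct response?


def score_by_category(results):
    """Return the percentage understood and answered correctly per category."""
    totals = defaultdict(lambda: {"asked": 0, "understood": 0, "correct": 0})
    for r in results:
        if r.category not in CATEGORIES:
            raise ValueError(f"unknown category: {r.category}")
        t = totals[r.category]
        t["asked"] += 1
        t["understood"] += int(r.understood)
        t["correct"] += int(r.correct)
    return {
        cat: {
            "understood_pct": 100.0 * t["understood"] / t["asked"],
            "correct_pct": 100.0 * t["correct"] / t["asked"],
        }
        for cat, t in totals.items()
    }


# Placeholder rows for illustration only; these are not data from the test.
sample = [
    GradedQuestion("Local", understood=True, correct=True),
    GradedQuestion("Local", understood=True, correct=False),
    GradedQuestion("Command", understood=False, correct=False),
]
scores = score_by_category(sample)
```

Keeping the two metrics separate makes it possible to tell an assistant that misheard a question apart from one that understood it but answered incorrectly.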

It is important to note that we modified our question set before conducting this round of tests in order to reflect the changing abilities of AI assistants. As voice computing becomes more versatile and assistants become more capable, we must alter our test so that it remains exhaustive.

These changes to our question set lowered the percentage of correct answers in the Navigation category compared with our previous round of tests, but the total number of correct answers still rose across the board. We are confident that our new question set better reflects the AI’s ability and is well-positioned for future tests.

Testing was conducted using Siri on iOS 11.4, Google Assistant on Pixel XL, Alexa via the iOS app, and Cortana via the iOS app.

Smart home devices tested include Wemo mini plug, TP-Link Kasa plug, Philips Hue lights, and Wemo Dimmer Switch.